Stanford Axes Alpaca AI, Facebook LLaMA Copy, Over Cost, Errors

Researchers at Stanford have taken down Alpaca, a short-lived chatbot built on Meta’s LLaMA model. Alpaca went public last week and came down shortly afterward as costs rose and safety risks became more apparent.

So far, researchers can't get AI to behave. The Stanford model, based on Meta's LLaMA, went down as fast as it went up.

October 1, 2022: Volume XC, No. 19 by Kirkus Reviews - Issuu

Summary of the State of AI Report 2023 - by Michael Spencer

Stanford Axes Alpaca AI, Facebook LLama Copy, Over Cost, Errors

RLHF 201 - with Nathan Lambert of AI2 and Interconnects

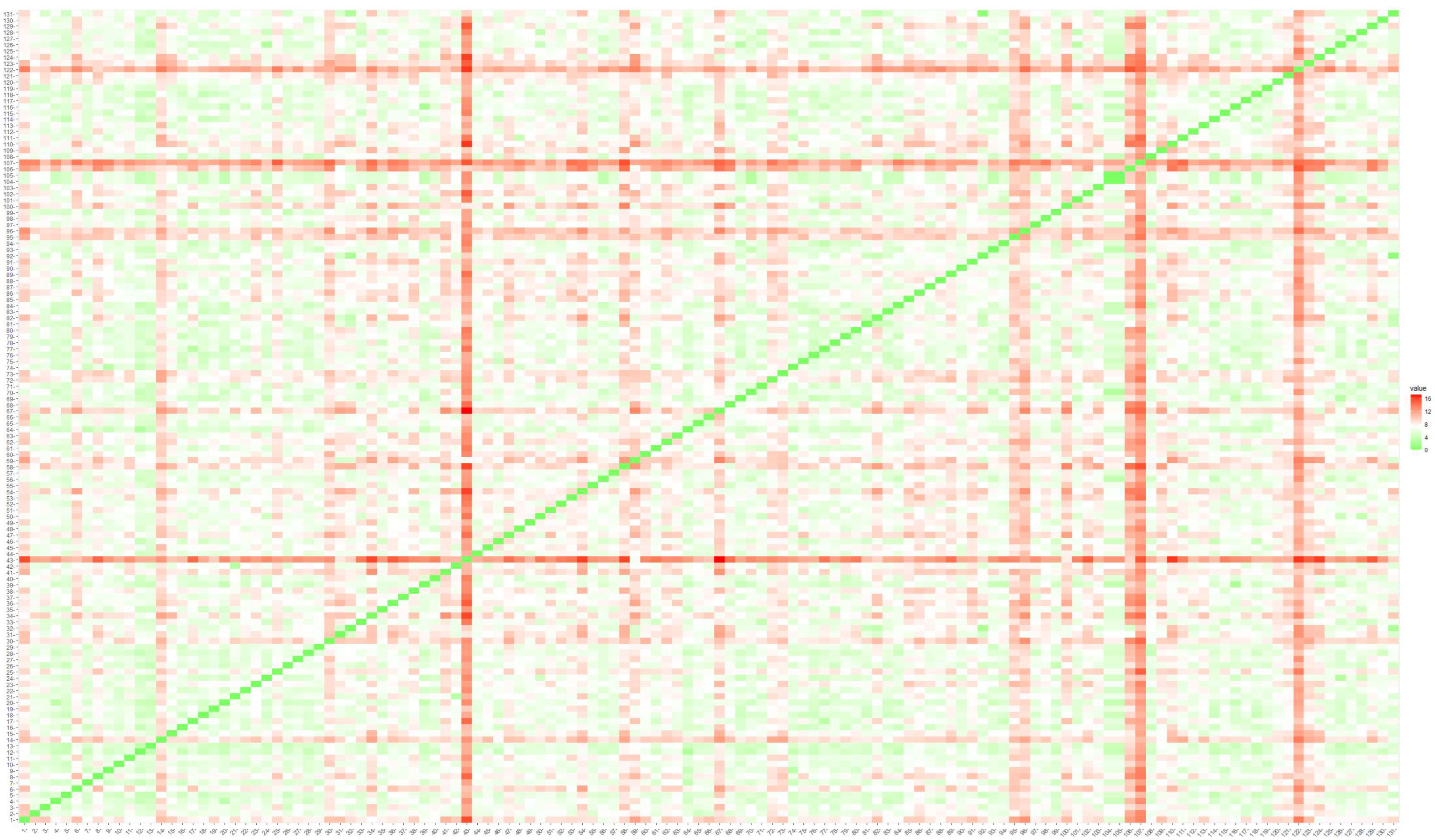

anton on X: Anyone try reproducing stanford's LLaMA Alpaca? I haven't seen loss curves like this. I reproduced it against another decoder model My attempt is on the left (it doesn't use

Game Changing AI from Stanford - Alpaca

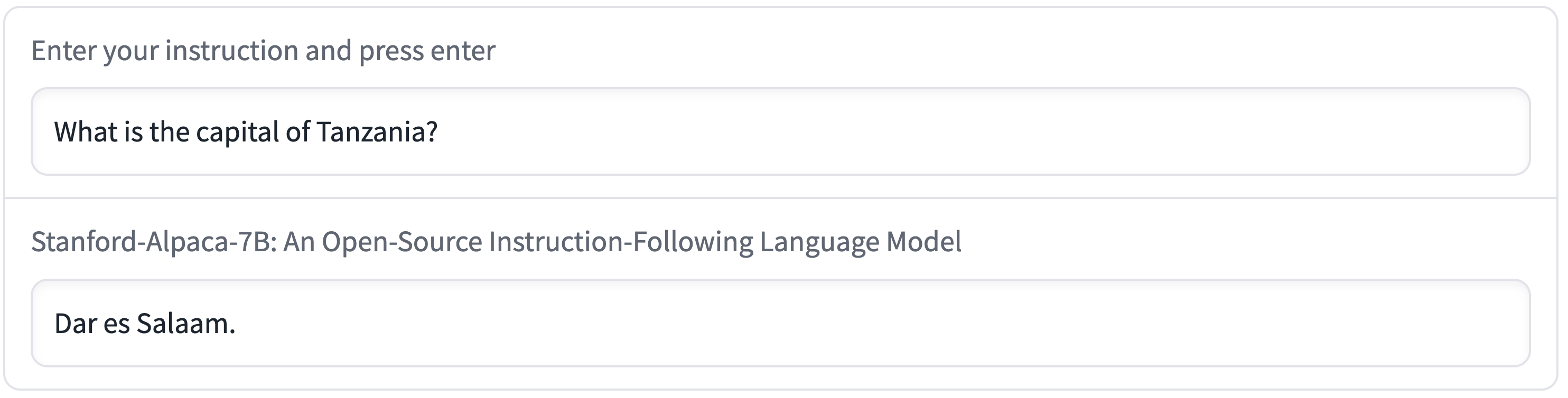

Run Stanford Alpaca 7B LLAMA Instruction Finetuned Model on Local System, Dalai

Multimodal LM roundup: Unified IO 2, inputs and outputs, Gemini, LLaVA-RLHF, and RLHF questions

Do By Friday

How does Alpaca follow your instructions? Stanford Researchers Discover How the Alpaca AI Model Uses Causal Models and Interpretable Variables for Numerical Reasoning - MarkTechPost

Information, Free Full-Text

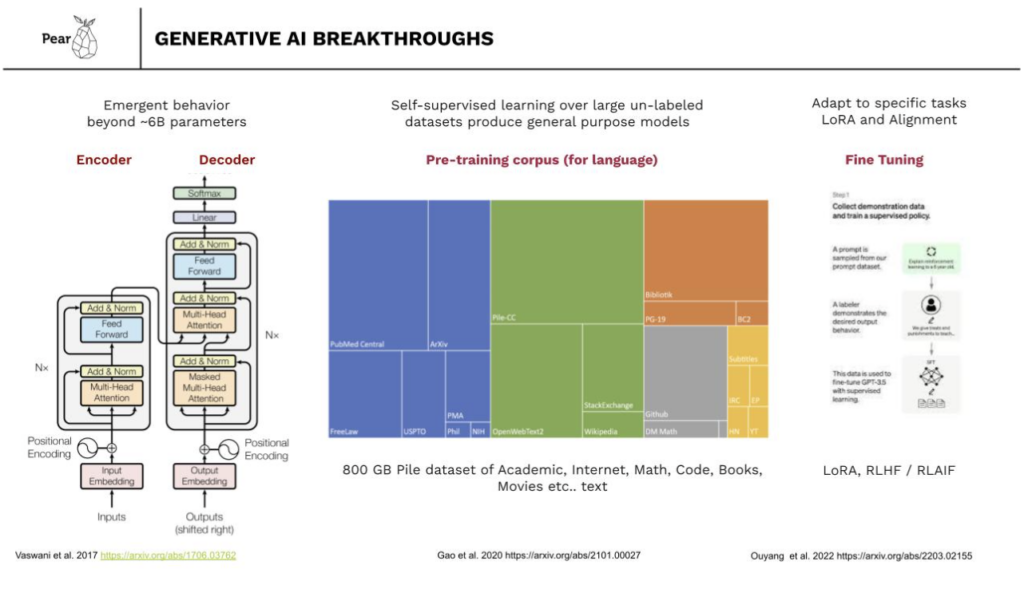

Product & Engineering Archives - Pear VC

Stanford Researchers Take Down Alpaca AI Due to 'Hallucinations' and Rising Costs

Stanford CRFM

New LLM Foundation Models - by Sebastian Raschka, PhD